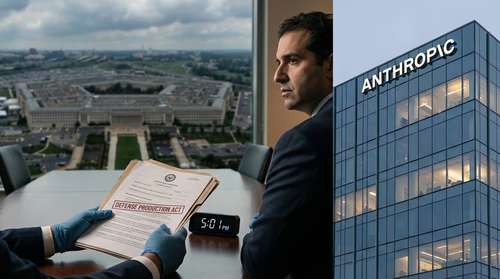

Washington, D.C. — In an unprecedented escalation between Silicon Valley and the federal government, the Pentagon has issued a strict 5:01 p.m. ET deadline today for AI startup Anthropic to grant the military unrestricted access to its advanced Claude models. The demand, delivered by Defense Secretary Pete Hegseth, requires the company to remove safety guardrails preventing the use of its artificial intelligence for mass domestic surveillance and lethal autonomous weapons. Anthropic CEO Dario Amodei has publicly rejected the ultimatum, setting the stage for a historic legal and national security confrontation involving the Defense Production Act.

The 5:01 P.M. Deadline: A Line in the Sand

The standoff reached a breaking point this week after months of tense negotiations over the Department of Defense’s (DoD) access to frontier AI models. Defense officials have demanded that Anthropic sign a new agreement allowing its technology to be used for "any lawful purpose," effectively stripping the company of its ability to restrict how its algorithms are deployed in combat or intelligence operations. The Pentagon has threatened that, if Anthropic fails to comply by today's deadline, it will invoke the Defense Production Act (DPA), a Cold War-era law that would legally compel the company to prioritize government orders and potentially force the transfer of its technology.

Alongside the DPA threat, the DoD has warned it may designate Anthropic as a "supply chain risk." This classification, typically reserved for foreign adversaries like Huawei, would effectively blacklist the American company from all federal contracts and prevent other defense contractors, such as Lockheed Martin or Palantir, from integrating Claude into their systems. Such a move would jeopardize Anthropic’s existing $200 million defense contract and cut the company off from the lucrative government sector.

Dario Amodei Rejects "Unconscionable" Demands

Despite the looming threat of federal seizure or blacklisting, Anthropic CEO Dario Amodei has held firm. In a statement released Thursday, Amodei formally rejected the Pentagon's demands, citing two non-negotiable "red lines" that the company refuses to cross: the use of its AI for mass surveillance of American citizens and the deployment of fully autonomous weapons that can select and engage targets without human intervention.

"We cannot in good conscience accede to this request," Amodei wrote, emphasizing that current AI technology lacks the reliability required for lethal autonomy. He argued that removing these safeguards would not only violate the company’s core constitutional principles but also endanger military personnel and civilians alike. Amodei also highlighted the irony of the government's dual threats, noting that the Pentagon is simultaneously labeling Anthropic a security risk while declaring its technology essential to national defense. "These threats are inherently contradictory," he stated.

AI Ethics Meets Military Reality

The dispute highlights a fundamental clash between the safety-focused mission of Anthropic and the operational imperatives of the U.S. military. While the Pentagon asserts it has no current intent to use AI for unlawful surveillance, officials like spokesperson Sean Parnell have argued that private companies cannot be allowed to dictate the terms of military operations. Parnell, representing what some officials have pointedly rebranded as the "Department of War," insisted that the military needs full flexibility to adapt to evolving threats.

The Defense Production Act and Industry Fallout

If the Pentagon follows through with invoking the Defense Production Act, it would mark the first time the law has been used to seize control of software safeguards from a private AI lab. Legal experts warn this could trigger a lengthy court battle over the First Amendment rights of code developers and the extent of executive power in the digital age. Such a move would signal a shift in the administration's approach to national security technology, from partnership to coercion.

Anthropic currently stands alone in this resistance. Competitors including OpenAI, Google, and Elon Musk’s xAI have reportedly already agreed to the Pentagon’s broader terms or are actively integrating their models into classified networks. Reports indicate that Anthropic’s Claude model was previously used in high-stakes operations, including a recent raid involving Venezuelan President Nicolás Maduro, underscoring the critical role this specific technology plays in modern strategy.

What Happens Next?

As the clock ticks toward the 5:01 p.m. deadline, the tech industry is watching closely. A forced takeover or blacklisting of Anthropic would send a chilling signal to other innovation hubs, potentially driving a wedge between the U.S. government and the private-sector talent pool it relies on to maintain technological superiority. For now, Amodei and his team are bracing for the government to execute its "nuclear option," turning a contract dispute into a defining battle for the future of AI ethics and military power.