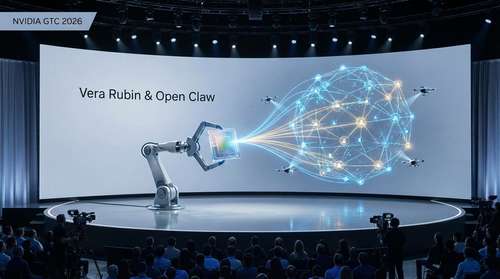

The computing paradigm has officially shifted. Speaking to a packed SAP Center in San Jose at Nvidia GTC 2026, CEO Jensen Huang unveiled the highly anticipated Nvidia Vera Rubin architecture and declared the arrival of the "Agentic AI" era. Paired with the Open Claw platform, this hardware and software rollout is engineered to power autonomous AI agents that can execute complex, multi-step workflows without human intervention.

With a $1 trillion revenue opportunity projected through 2027, this week's announcements push artificial intelligence well beyond conversational chatbots. The tech industry's focus is now on self-evolving software systems that can reason, manage long-running tasks independently, and drive major gains in industrial efficiency.

The Dawn of the Nvidia Vera Rubin Architecture

Built from the ground up for the heavy computational demands of modern mixture-of-experts (MoE) models and reinforcement learning, the Nvidia Vera Rubin architecture is the most vertically integrated hardware ecosystem the company has ever produced. The flagship Vera Rubin POD combines seven distinct chip types and five purpose-built rack-scale systems into a single AI supercomputer.

At the core of this hardware are the new liquid-cooled Vera CPUs, which offer triple the memory bandwidth and twice the energy efficiency of traditional x86 processors. These CPUs work in tandem with the Rubin GPUs to manage the large context memory that always-on autonomous AI agents require.

Unprecedented Scale and Groq Integration

The physical scale of the new hardware is enormous. A single Vera Rubin POD houses 1.2 quadrillion transistors, integrates nearly 20,000 Nvidia dies, and delivers 60 exaflops of processing power. Perhaps the most surprising architectural shift is the integration of Groq 3 LPX processors. Stemming from a landmark December 2025 licensing deal, these specialized chips sit in their own dedicated racks and deliver up to 35 times higher inference throughput per megawatt for real-time applications.

By tightly coupling compute, the new Spectrum-6 SPX networking, and BlueField-4 STX AI-native storage, Nvidia is enabling cloud providers to maximize token generation while drastically lowering power consumption. For enterprise clients, the Vera Rubin NVL72 rack system delivers up to a 10x reduction in inference token costs compared to the previous Blackwell generation.

Open Claw Platform: The New AI Operating System

While the silicon provides the raw computational muscle, the software ecosystem is what will change daily work for users and developers. During his GTC keynote, Huang drew a direct historical parallel between the new Open Claw platform and the most important software shifts of the modern era. "Open Claw has open-sourced essentially the operating system of agentic computers," Huang stated, comparing its impact to how Windows enabled the personal computing revolution and Linux enabled the internet.

Originally known in the open-source community as a viral local-first assistant, Open Claw functions as a universal operating system for autonomous AI agents. Whether it acts as a local personal secretary managing desktop files and calendars or as a proactive corporate project manager, the software runs continuously, evaluating data and executing workflows.

Enterprise Security with NemoClaw

To make this ecosystem enterprise-ready, Nvidia simultaneously launched the NemoClaw stack. Installed with a single command, NemoClaw upgrades the base Open Claw system with the new 'OpenShell' runtime, which provides the privacy sandboxing, network policies, and security guardrails that autonomous software needs to operate safely within corporate environments. An agent keeps the deep system access it needs to be productive while remaining strictly confined by organizational data policies.

Fueling a $1 Trillion Autonomous Revolution

The transition from reactive generative AI to proactive Agentic AI is not just a technological leap; it is an economic one. Nvidia expects the ecosystem surrounding Vera Rubin and Groq LPX racks to drive a $300 billion annual revenue opportunity, contributing to a $1 trillion market outlook over the next few years. This growth is driven by the fact that autonomous AI requires constant background computation rather than short, burst-style query responses.

Major infrastructure partners are already securing their share of this new market. Hewlett Packard Enterprise (HPE) announced at GTC 2026 that it is rolling out comprehensive Private Cloud AI systems built entirely around the Rubin architecture. These include air-gapped configurations designed for highly regulated industries such as defense, sovereign finance, and healthcare, ensuring that sensitive corporate data stays on-premises.

What This Means for the Future of Artificial Intelligence

The developments unveiled at Nvidia GTC 2026 make one thing clear: the future of artificial intelligence is no longer limited to human prompting. It is about deploying secure, independent agents that can be trusted to carry out complex, multi-stage objectives continuously.

From individual power users running private Open Claw assistants on local RTX 5090 graphics cards to gigawatt-scale Vera Rubin AI factories powering enterprise ecosystems, Nvidia has laid down an end-to-end infrastructure stack. As chief executives worldwide scramble to develop what Huang calls their "Open Claw strategy," the broader technology sector is waking up to a new reality: the foundational layer for the next decade of autonomous, self-directed software has officially been built.